Are you curious about using AI models like ChatGPT, but concerned about the privacy of your sensitive business and personal data? You may be right to worry, and there may be a solution that can help. This article begins a multi-part series on the importance of privacy when using Artificial Intelligence. Keep reading to learn more.

Part 1: Introduction to LLMs and Privacy

In today's rapidly advancing technological landscape, Artificial Intelligence (AI) and Large Language Models (LLMs) have emerged as game-changers in the fields of Natural Language Processing (NLP) and Natural Language Generation (NLG). Since the release of advanced models like OpenAI’s GPT-3, and later GPT-4 and Google’s Gemini, LLMs have gained significant popularity thanks to their text completion capabilities, which generate text that sounds human. However, the path to implementing AI and LLMs splits into two diverging philosophies: cloud-based AI services owned by companies, and Local AI implementations. In this article we weigh the pros and cons of each approach and explain why you might want to try Local AI if you have privacy concerns.

Understanding AI through Large Language Models (LLMs)

LLM stands for "Large Language Model", and it has become one of the fastest-growing keywords since the start of 2023, following the release of ChatGPT in late 2022. These generative models perform text inference; in other words, they provide a “probable” response to a text input (not necessarily a correct or accurate response).
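
To make the idea of a “probable” response concrete, here is a minimal sketch of next-word prediction using simple word-pair counts. This is a toy illustration, not how a real LLM is built: the corpus is made up, and the function names (`build_bigram_counts`, `next_word_probabilities`, `most_probable_next`) are hypothetical names chosen for this example. Real models use neural networks trained on vastly more text, but the principle of choosing a likely continuation is similar.

```python
from collections import Counter, defaultdict

# Tiny made-up corpus for illustration only.
CORPUS = (
    "the cat sat on the mat . "
    "the cat sat on the sofa . "
    "the cat ate the fish . "
    "the dog sat on the mat ."
)


def build_bigram_counts(text):
    """Count how often each word is followed by each other word."""
    counts = defaultdict(Counter)
    words = text.split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def next_word_probabilities(counts, word):
    """Return the probability of each word seen after `word` in the corpus."""
    followers = counts.get(word, Counter())
    total = sum(followers.values())
    if total == 0:
        return {}  # the word never appeared with a follower
    return {w: n / total for w, n in followers.items()}


def most_probable_next(counts, word):
    """Pick the most frequent follower: probable, not necessarily correct."""
    followers = counts.get(word, Counter())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


counts = build_bigram_counts(CORPUS)
probs = next_word_probabilities(counts, "cat")
print(probs)
print(most_probable_next(counts, "cat"))
```

In this corpus, "sat" follows "cat" in two of three cases, so the sketch picks "sat" even in a context where "ate" would be the right word. That is the sense in which a response can be probable without being correct.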

This type of AI model has become crucial for executing and automating tasks related to Natural Language Processing (NLP), which refers to computers trying to “understand” human language, and Natural Language Generation (NLG), which refers to computers trying to create human-like text. As more people learn about their uses and how to mitigate their drawbacks, LLMs will become more prevalent in areas like code generation, content creation, chatbots, machine translation, and spelling correction.

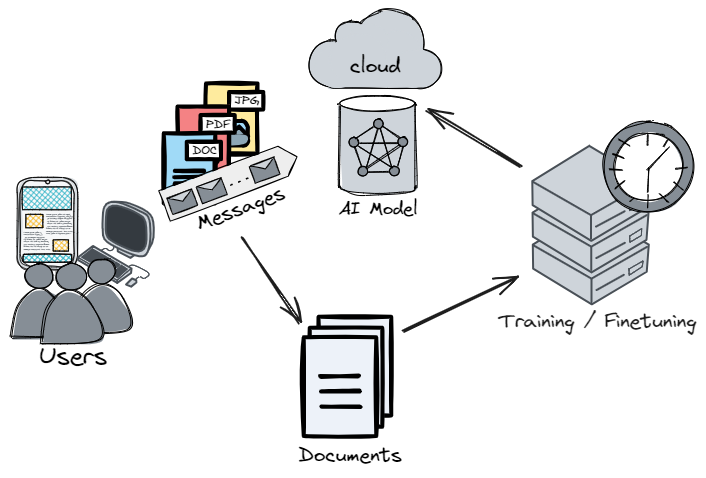

Currently, two diverging philosophies surround generative AI. Cloud LLMs, by far the most prevalent, are large language models run as a cloud-based service for other companies or entities. Usually, this is done through the SaaS (Software as a Service) model, and some big players in this space include OpenAI’s “ChatGPT”, Microsoft’s “Copilot” and Google’s “Gemini”. These Cloud LLM services are convenient for most users and allow integration into other products, but the broad scale of their implementation means that clients have little to no control over the models and their capabilities.

Opposing this, there is the Local AI community. Local AI models generally consist of openly accessible (open source) models that anyone can download and use for their own purposes. This means that you can fine-tune (or “train”) them with your own information to meet your specific needs. This gives you more control over how the AI is used and lets you avoid limitations imposed by big-tech AI tools, such as censorship. Additionally, as we will discuss next, Local AI allows you to maintain better control over your personal and sensitive data.

Why Privacy Matters in AI

As these cloud-based AI services and LLMs have grown, the data used to train them has become increasingly valuable to the companies that provide them. As a result, these companies often enforce terms of service that include the right to use clients' conversations to train their future models. This might not be a concern for some people, but it certainly matters if you plan to use AI models to process business-critical information such as contacts or deals. It is therefore important to make sure no sensitive information is entered into cloud-hosted models without carefully reviewing their terms of service, and even then, it is better to avoid it.

How do Local LLMs ensure better data privacy?

Many Local LLMs are open source, which allows you to download them and run them on your own computers without sending your data to a big-tech company. They are ideal for business solutions that must comply with strict privacy policies, or if you simply feel more at ease avoiding privacy concerns altogether. Instead of having to trust an external company with your personal and sensitive data, you can keep your data with you, allowing you to use LLMs to process confidential information directly in your automations.
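
As a rough sketch of what keeping your data with you can look like in an automation, the example below builds a request for a model server running on the same machine and refuses any endpoint that is not local. Everything specific here is an assumption made for illustration: it assumes a local server that exposes an Ollama-style `/api/generate` endpoint on port 11434, the model name `llama3` is a placeholder, and the helper names (`is_loopback`, `build_request`, `ask_local_model`) are hypothetical. The hostname check is a simple guard, not a complete security control.

```python
import json
import urllib.request
from urllib.parse import urlparse

# Assumptions: a model server runs on this machine and exposes an
# Ollama-style endpoint. The URL and model name are placeholders.
LOCAL_ENDPOINT = "http://localhost:11434/api/generate"
MODEL_NAME = "llama3"

LOOPBACK_HOSTS = {"localhost", "127.0.0.1", "::1"}


def is_loopback(url):
    """Return True only if the URL's host refers to this machine."""
    return urlparse(url).hostname in LOOPBACK_HOSTS


def build_request(prompt, endpoint=LOCAL_ENDPOINT, model=MODEL_NAME):
    """Build the HTTP request, refusing endpoints outside this machine."""
    if not is_loopback(endpoint):
        raise ValueError(f"Refusing to send data to non-local endpoint: {endpoint}")
    payload = json.dumps({"model": model, "prompt": prompt, "stream": False})
    return urllib.request.Request(
        endpoint,
        data=payload.encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_model(prompt):
    """Send the prompt to the local server and return the generated text.

    Assumes the server replies with JSON containing a "response" field.
    """
    with urllib.request.urlopen(build_request(prompt), timeout=120) as reply:
        return json.loads(reply.read())["response"]
```

With a local server running, calling `ask_local_model("Summarize this internal memo: ...")` would send the text only to `localhost`, so the confidential content never leaves the machine.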

The use of big-tech cloud AI tools like ChatGPT in the workplace keeps increasing, and with it comes an increased risk of employees sharing confidential data through these tools. It is important to train employees on the importance of privacy in their conversations with most cloud-hosted models. This can also be an incentive for companies to implement Local AI that their employees can use safely, which would reduce the need for them to look for “free” services like ChatGPT or Google's Gemini.

Conclusion

Artificial Intelligence is a field that has grown over the past months like no other technology, thanks to its striking ability to imitate inference and human reasoning. It will play a big role in automating tasks that were previously impossible to automate, such as summarizing and analyzing text, providing customer support, and other solutions that leverage text generation and processing. However, there are different implementations available: cloud-based models provided as a service and self-managed Local AI models. Each has its own advantages and disadvantages, and you must make an informed decision when choosing an AI solution that suits your business and personal needs.

Therefore, understanding what tradeoffs you are making is crucial, and the privacy concerns that cloud services bring with their terms of service could be the deal breaker that pushes your business to self-manage its AI tools and even train your own AI and Large Language Models.

However, before jumping into that, you need to make sure you understand how best to fit the models into your processes, which might require professionals who are knowledgeable in development and who understand these new and ever-changing AI technologies.

At Antemodal, we always try to stay up to date with the latest advances in technology and AI, so our clients can be sure we will implement great solutions that last. Contact us if you want to learn more about AI and how it can supercharge your business or personal goals!

Contact Antemodal Today

Discuss how our AI solutions can help you address privacy concerns and elevate your business or personal goals!